In February 2026, Gartner published a prediction that should unsettle any executive who has bet everything on automation: half of the companies that reduced customer service staff because of AI will rehire people for similar roles before 2027. This is not fringe speculation. It comes from the world's most cited analyst firm, drawing on data from 321 customer service leaders, and it contradicts the dominant narrative of the last three years.

The story is deeper than it seems. It's not that artificial intelligence doesn't work. It does. The problem is that it works so well solving routine tasks that it exposes, with brutal clarity, everything it cannot solve. And that "everything it cannot solve" turns out to be exactly what defines whether a customer stays or leaves, trusts or distrusts, recommends or warns.

This is the automation paradox. And understanding it is not a philosophical exercise: it is the most important strategic decision a service company can make in 2026.

The paradox nobody anticipated

There's an idea that runs through the history of technology like an invisible thread. It was formulated by the Hungarian-British philosopher Michael Polanyi in 1966, long before anything resembling a chatbot existed: "We know more than we can tell." Polanyi was referring to tacit knowledge — that human ability to do things we cannot explain with rules. Recognizing a face among thousands. Sensing that a customer is frustrated before they say it. Knowing that this conversation needs a different tone.

For decades, Polanyi's paradox was a barrier to automation. If you can't articulate the rules, you can't program them. Rule-based chatbots and IVR systems operated precisely within that limit: they did what you could specify, and nothing more.

But generative artificial intelligence changed the equation. Language models don't need explicit rules: they learn patterns of human behavior from millions of examples. A study by Stanford University and MIT researchers, published as an NBER working paper, showed that an AI tool trained on the transcripts of a contact center's best agents managed to transfer that tacit knowledge to the rest of the team. The result: a productivity increase of 14% on average, and 34% for novice agents. Agents with two months of experience who used the tool performed at the level of agents with six months of experience who worked without it.

And here the paradox emerges. AI solves operational tacit knowledge — the how to respond, the what information to give, the when to escalate. But by solving that, it reveals a deeper layer of tacit knowledge that remains exclusively human: judgment, contextual empathy, the ability to read between the lines. The more technically competent the machine becomes, the more visible what it lacks becomes. And what it lacks is, paradoxically, what matters most.

The Klarna case: anatomy of an excess

If the automation paradox needed a corporate case study, Klarna provided it with unintentional generosity.

In 2024, the Swedish fintech proudly announced that its AI chatbot — developed with OpenAI — had replaced 700 customer service agents. Initial numbers seemed to validate the decision: the bot handled 75% of chats, processing 2.3 million conversations in 35 languages. The CEO, Sebastian Siemiatkowski, turned the metric into a corporate slogan.

What wasn't measured — or was measured and ignored — was what happened with the other 25%. Complex interactions. Customers with refund issues that didn't fit predefined patterns. Emotionally charged situations where the bot's speed felt like indifference. Cases where the customer needed to feel that someone understood them, not that someone processed them.

By early 2025, satisfaction metrics in complex interactions had deteriorated. Customers reported robotic responses, inflexible scripts, and what one analyst described as a "Kafkaesque loop": repeating the problem to a human after the bot failed, as if the first conversation hadn't existed.

In May 2025, Siemiatkowski publicly admitted: "We focused too much on efficiency and cost. The result was lower quality." By 2026, Klarna was rehiring human agents under a hybrid model it called "Uber-style": remote agents with flexible hours, recruited among students and parents.

Klarna's narrative arc is instructive not for its failure, but for its logic. The company did not make a technical error. It made a philosophical error: it confused the ability to process with the ability to understand. It automated the transaction, but the transaction was only the surface of what its customers needed.

What we know more than we can tell

Let's return to Polanyi, because his idea has deeper implications than the tech industry usually grants it.

When an experienced human agent speaks with an angry customer, they are doing something that no procedure manual can fully capture. They are reading micro-signals: the rhythm of words, the choice of terms, the implicit context. They are adjusting their tone in real-time, not following an "empathy" script with pre-designed phrases. They are making a decision that integrates emotional, contextual, and operational information in a way that even the agent couldn't articulate if you asked them to explain exactly what they did and why.

This is what MIT researchers call "knowledge spillover" — the overflow of tacit knowledge. What's fascinating about the Stanford-MIT study is that AI managed to capture part of that operational tacit knowledge and transfer it. But the part it captured is the most superficial layer: response patterns, successful sequences, conversation structures. The deep layer — the one that distinguishes an agent who solves from one who connects — remains opaque to the machine.

Sherry Turkle, MIT professor and author of "Alone Together", has spent two decades studying the relationship between humans and machines. Her conclusion is uncomfortable for the industry: machines can simulate intimacy, but simulation does not produce trust. It produces, at best, comfort. And at worst, what she calls "the uncanny valley of empathy" — that unsettling feeling that something pretends to understand you without actually doing so.

In customer service, this distinction is not academic. According to Harvard Business Review, customers form emotional connections with brands through three dimensions: competence, warmth, and authenticity. AI excels in competence — it is fast, accurate, available. But warmth and authenticity remain human territories. And what's relevant is that all three dimensions are necessary. Competence without warmth is cold efficiency. Warmth without competence is useless good intention. The company that achieves all three is the one that retains.

The numbers that support the paradox

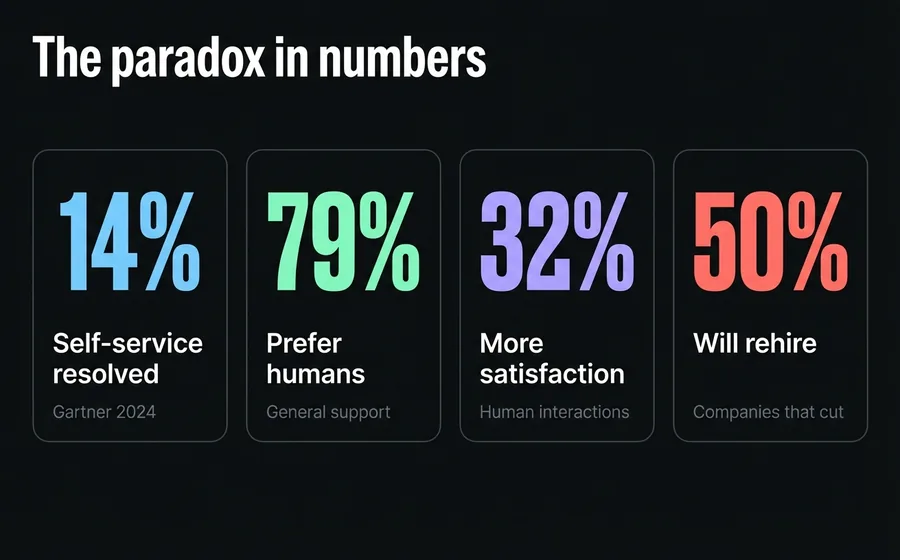

The automation paradox is not an intuition. It is a documented trend with converging data from multiple sources:

| Finding | Source | Year |
|---|---|---|
| Only 14% of service issues are fully resolved through self-service | Gartner | 2024 |
| 79% of consumers prefer to interact with a human for general support | SurveyMonkey | 2026 |
| 89% believe companies should always offer the option to speak with a person | Global CX Survey | 2025 |
| 78% say it's important to be able to escalate from an AI agent to a human one | Global CX Survey | 2025 |
| Emotionally charged interactions resolved by humans generate 32% more satisfaction | McKinsey | 2025 |
| 91% of customer service leaders feel pressure to implement AI | Gartner | 2026 |
| Only 20% of organizations effectively reduced their agent workforce thanks to AI | Gartner | 2025 |

Read together, these data tell a coherent story. There is enormous pressure to automate (91% of leaders feel it). Automation solves a fraction of the problem (14% complete resolution in self-service). Customers still want humans for what matters (79% preference). And few companies manage to cut agents at all (20%), while Gartner expects half of those that did to rehire.

Perhaps the most revealing data point is the simplest: only 8% of consumers prefer AI over a human in customer service. Among that 8%, the reasons are availability (41%) and speed (37%) — operational advantages, not relational ones. Nobody says "I prefer AI because it understands me better."

The last human meter

In logistics, there is the concept of the "last mile" — the final stretch of delivery that is, paradoxically, the most costly and complex of the entire chain. Getting from the distribution center to your doorstep costs more than moving the package 5,000 kilometers by plane.

In customer service, there is an equivalent that we could call the "last human meter." It is the moment when the conversation stops being an exchange of information and becomes an emotional negotiation. The customer doesn't need data. They need to feel that their problem matters. That the person (or entity) on the other side has the authority and willingness to resolve it. That they are not a case but a person.

This last meter is where loyalty is decided. And it is, by definition, where AI has its greatest limitation. Not because it cannot generate empathetic phrases — it can, and increasingly better — but because empathy without consequences is not empathy. It is simulation. A human can say "I will resolve this personally" and that phrase carries weight because there is a person behind it who puts their professional reputation on the line. When a bot says the same thing, it is a chain of tokens optimized to produce satisfaction. The customer knows it. Sometimes consciously, sometimes as an intuition they cannot articulate.

The irony is that the better AI becomes at handling the routine 80%, the higher the standard for the 20% that reaches a human. If the bot flawlessly resolved five simple questions, the customer expects the human agent they finally reach to be exceptional. Not just good. Exceptional. Automation does not lower the human standard. It elevates it.

Three strategic errors of over-automation

1. Confusing resolution with satisfaction

A ticket can be marked as "resolved" when the bot provided the correct answer. But resolution is not the same as satisfaction, and satisfaction is not the same as loyalty. According to McKinsey, interactions that generate emotional connection have a 32% higher retention impact than those that simply solve the problem. The company that only measures resolution rate is optimizing one metric while eroding a more important one.

2. Automating before understanding

Gartner warned in 2026 that 40% of agentic AI projects will fail because they attempt to "automate obsolete processes." You cannot automate a broken process and expect AI to fix it. If your escalation flow is confusing with humans, it will be confusing with bots. If your support categories do not reflect your customers' real problems, a bot trained on those categories will reproduce the confusion faster.

AI amplifies what already exists. If operational clarity exists, it amplifies it. If chaos exists, it amplifies that too. Before automating, the human work of understanding what works and what doesn't must be done.

3. Eliminating relational context

When a company completely replaces a human support team, it doesn't just eliminate agents. It eliminates the informal knowledge network those agents had built: which frequent customer always has billing problems, what types of requests end in escalation, what tone works with the Chilean market versus the Argentinian one. That knowledge is not in any database. It lives in the collective experience of the team. And once lost, it cannot be recovered by training a model.

The hybrid model: neither all human nor all machine

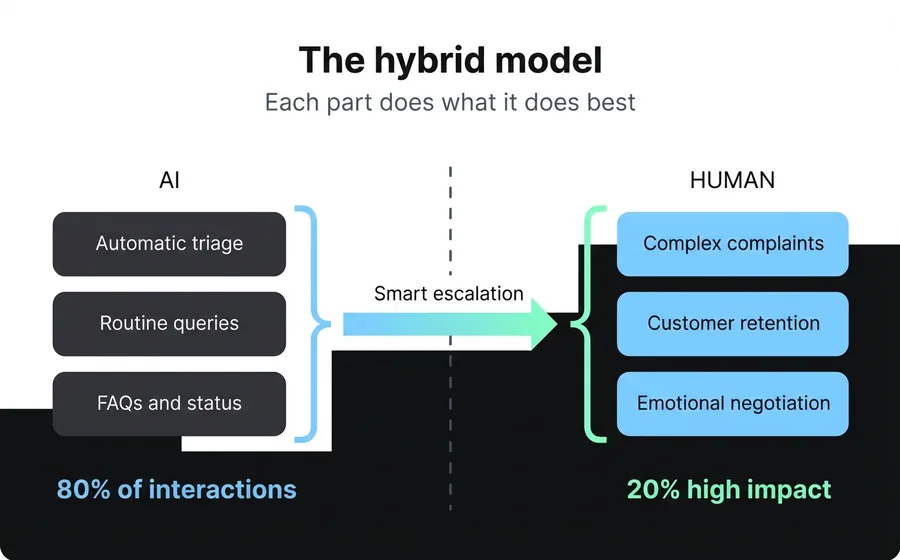

If the data converges on anything, it's this: the future is not AI versus humans. It's AI with humans, where each does what they do best.

The Stanford-MIT study offers the most concrete clue. AI as a coaching tool — not as a replacement — produced measurable improvements: 14% more productivity, 25% fewer escalations to supervisors, better employee retention. The model works because it respects the paradox: it uses AI to transfer tacit operational knowledge, but keeps the human at the center of the interaction.

Gartner projects that by 2026, 55% of customer service interactions will be hybrid AI-human. Not AI alone, not human alone: hybrid. AI executes with precision; the human provides judgment. AI processes context; the human interprets emotion. AI suggests the answer; the human decides whether it is the right one for this customer, at this moment, with this history.

This model requires a mindset shift. The human agent in a hybrid system is not an operator who follows instructions. They are a relationship professional, equipped with AI tools that expand their capabilities. Their job is not to respond faster — the machine has already done that. Their job is to connect with the customer at the moment that matters, resolve what the machine cannot, and turn a problem into a loyalty opportunity.

Platforms that understand this do not design AI to replace the agent. They design it to empower them: suggesting answers, summarizing customer history, detecting conversation sentiment in real-time, and freeing them from repetitive tasks so they can dedicate their energy to relational aspects.

Implications for the next 18 months

Invisible AI will be more valuable than visible AI

The companies that will generate the most impact are not those that put a bot up front and say "talk to our AI." They are those that integrate AI invisibly into the human agent's workflow. The customer does not need to know that the answer was suggested by a language model. They need to know that they were answered well, quickly, and thoughtfully.

Human agents will be more expensive and more valuable

If AI handles 80% of routine inquiries, the remaining 20% requires a significantly higher skill level. This means companies need to invest more in training, not less. The agent of the future is not the one who answers FAQs — that's what the bot is for. It's the one who navigates difficult conversations, retains angry customers, and turns complaints into opportunities. That profile is scarcer and more valuable.

Measurement will change

Traditional contact center metrics — average resolution time, tickets closed per hour, first contact resolution rate — are designed to measure transactional efficiency. They are relevant, but incomplete. Leading companies will also measure: customer sentiment post-interaction, retention rate linked to human vs. automated interactions, Net Promoter Score segmented by agent type (human, bot, hybrid), and customer lifetime value (CLV) by resolution channel.
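
To make the segmented measurement concrete, here is a minimal sketch of Net Promoter Score computed per resolution channel. It uses the standard NPS definition (share of promoters scoring 9 or 10 minus share of detractors scoring 0 to 6). The function names, the channel labels and the sample responses are hypothetical, chosen only for illustration; they are not data from any of the studies cited above.

```python
from collections import defaultdict


def nps(scores):
    """Net Promoter Score: % of promoters (9-10) minus % of detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def nps_by_channel(responses):
    """Group (channel, score) pairs and compute one NPS per channel."""
    grouped = defaultdict(list)
    for channel, score in responses:
        grouped[channel].append(score)
    return {channel: nps(scores) for channel, scores in grouped.items()}


# Hypothetical survey responses: (resolution channel, 0-10 score).
sample = [
    ("bot", 9), ("bot", 6), ("bot", 7), ("bot", 3),
    ("human", 10), ("human", 9), ("human", 8), ("human", 5),
    ("hybrid", 10), ("hybrid", 9), ("hybrid", 9), ("hybrid", 7),
]

result = nps_by_channel(sample)
for channel, score in result.items():
    print(f"{channel}: {score:+.1f}")
```

A single blended NPS would hide the spread between channels. Splitting by channel is what lets a team see whether the bot, the human or the hybrid path is the one building or eroding loyalty.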

The hybrid model will be the standard, not the exception

By the end of 2027, companies operating with a 100% automated or 100% human model will be the minority. The standard will be an ecosystem where AI performs triage, resolves routine issues, escalates complex ones, and humans intervene with full context and AI tools as a copilot. The platforms that do not offer this architecture will lose relevance.
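
The triage-and-escalate flow described above can be sketched in a few lines. This is an illustrative outline under stated assumptions, not a reference architecture: the intent labels, the sentiment scale from -1.0 to 1.0, the thresholds and every class and function name are hypothetical. The one design point it tries to show is that the handoff carries the full transcript, so the customer does not have to repeat the problem to the human.

```python
from dataclasses import dataclass, field

# Hypothetical set of intents considered safe for full automation.
ROUTINE_INTENTS = {"order_status", "faq", "password_reset"}


@dataclass
class Conversation:
    customer_id: str
    intent: str
    sentiment: float  # assumed upstream score: -1.0 (negative) to 1.0 (positive)
    transcript: list = field(default_factory=list)
    bot_attempts: int = 0


@dataclass
class Handoff:
    customer_id: str
    intent: str
    reason: str
    transcript: list  # full history travels with the escalation


def triage(conv, sentiment_floor=-0.3, max_bot_attempts=2):
    """Return "bot" for routine cases, otherwise a Handoff with full context."""
    if conv.intent not in ROUTINE_INTENTS:
        reason = "non_routine_intent"
    elif conv.sentiment < sentiment_floor:
        reason = "negative_sentiment"
    elif conv.bot_attempts >= max_bot_attempts:
        reason = "bot_could_not_resolve"
    else:
        return "bot"
    # Copy the transcript so the human agent sees everything said so far.
    return Handoff(conv.customer_id, conv.intent, reason, list(conv.transcript))


routine = Conversation("c1", "order_status", 0.4, ["Where is my order?"])
angry = Conversation("c2", "order_status", -0.8, ["This is the third time I ask!"])

print(triage(routine))
print(triage(angry))
```

In this sketch a routine intent alone is not enough to keep the conversation with the bot: strongly negative sentiment or repeated failed bot attempts also trigger escalation, which mirrors the point that the emotionally charged cases are the ones that need a person.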

Frequently asked questions

Will AI replace customer service agents?

Not in the way it was predicted. Gartner estimates that agentic AI will autonomously resolve 80% of common inquiries by 2029, but Gartner itself predicts that half of the companies that cut agents will rehire before 2027. The emerging model is one of collaboration, not replacement: AI handles routine tasks and humans focus on complex and relational aspects.

What is the automation paradox in customer service?

It is the phenomenon by which automating routine interactions does not reduce the need for humans, but rather transforms and elevates it. The more efficient AI is at resolving simple inquiries, the higher the quality standard customers expect in interactions that reach a human. Automation does not eliminate the human element; it makes it more visible and more valuable.

Why did Klarna's AI strategy fail?

Klarna eliminated approximately 700 customer service agents and replaced them with an AI chatbot in 2024. While the bot successfully processed 75% of chats, satisfaction dropped in complex interactions. CEO Sebastian Siemiatkowski admitted that the company "focused too much on efficiency and cost." By 2026, they were rehiring humans under a hybrid model. The error was not technical but strategic: they confused the ability to process with the ability to understand.

How much do consumers prefer human support over AI?

79% of consumers prefer to interact with a human for general support. Only 8% prefer AI over humans, and their reasons are operational (availability and speed), not relational. However, 51% prefer bots for immediate service on simple inquiries, which confirms that the optimal model is hybrid.

What should a company automate and what should it not?

Automate what can be resolved with information and a clear process (status inquiries, FAQs, standardized procedures). Keep humans for what requires judgment, emotional negotiation, or relational context (complaints, retention, complex sales, exceptional situations). The criterion is not "Can AI do it?" but "Does the customer need to feel there's a person?" If the answer is yes, AI should be the copilot, not the pilot.

Conclusion

The automation paradox is not a technical problem that can be solved with a more advanced model. It is a truth about human nature: we value what we feel is authentic, and authenticity does not scale with servers. AI is the most powerful tool to have arrived in customer service in decades. But like any powerful tool, its value is not in what it can do alone, but in how it amplifies what people do.

The companies that will lead in the coming years are not those that automate the most. They are those that automate better — freeing their human teams from repetitive tasks so they can focus on what no machine can replicate: the ability to make another human being feel that their problem matters.

That's not sentimentality. It's strategy. And the data confirms it.